IstoVisio (syGlass) Inc.

Boosting Efficiency by 41% Through Voice Command Integration

syGlass develops high-performance 3D and VR visualization tools that enable researchers and scientists to explore complex volumetric data with clarity and ease. The software optimizes data analysis workflows, allowing for seamless interaction with large-scale datasets like microscopy scans and medical imaging in an immersive, intuitive environment.

PROBLEM

syGlass users (scientists and healthcare professionals) spend excessive time on routine tasks because of repetitive, multi-step processes.

SOLUTION & IMPACT

Integrating voice commands into multi-click operations improves efficiency by minimizing workflow interruptions.

With this solution in place, average task completion time fell from 12 seconds to 7 seconds per action, a 41% efficiency gain measured as time saved per action.

COMPANY & INDUSTRY

syGlass | Software Development

MY ROLE

Lead Product Designer

TEAM

Lead Engineer, Engineer

TIMELINE

September - November 2024

SPEECH RECOGNITION SYSTEM

Whisper AI (OpenAI)

BEFORE & AFTER WORKFLOW

BACKGROUND & DISCOVERY

During Q3 development planning, I reviewed customer feedback and support tickets and noticed a common issue: users were frustrated by repetitive menu-based tasks.

Some users suggested adding a Siri-like voice assistant to make things easier. This led me to explore voice command integration to speed up workflows and reduce friction.

Challenges:

Scalability & Privacy: A full voice assistant wasn’t possible due to limited resources and privacy concerns.

Technical Limitations: Continuous voice listening was difficult to implement.

Usability & Adoption: Users needed a simple and easy-to-learn voice command system.

To solve these problems, I designed a voice command system that lets users complete tasks without continuous listening. This improved efficiency while keeping user privacy intact.
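One common way to avoid continuous listening is a push-to-talk gate, where audio is captured only while the user holds a button. The sketch below illustrates that idea under stated assumptions: the `PushToTalk` class, its method names, and the injected `transcribe` callable are hypothetical and are not taken from the syGlass implementation. In practice the callable would wrap the speech recognition system.

```python
from typing import Callable, List, Optional


class PushToTalk:
    """Buffers audio only while a (hypothetical) controller button is held."""

    def __init__(self, transcribe: Callable[[bytes], str]) -> None:
        self._transcribe = transcribe  # assumed wrapper around the speech model
        self._chunks: List[bytes] = []
        self._held = False

    def press(self) -> None:
        """Start a new capture; any earlier buffered audio is discarded."""
        self._chunks = []
        self._held = True

    def feed(self, chunk: bytes) -> None:
        """Receive microphone data; chunks arriving while idle are dropped."""
        if self._held:
            self._chunks.append(chunk)

    def release(self) -> Optional[str]:
        """Stop capturing and transcribe what was recorded, if anything."""
        if not self._held:
            return None
        self._held = False
        audio = b"".join(self._chunks)
        self._chunks = []
        return self._transcribe(audio) if audio else None
```

Because nothing is buffered outside the press and release window, speech that occurs while the button is up never reaches the recognizer, which is the privacy property described above.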

RESEARCH

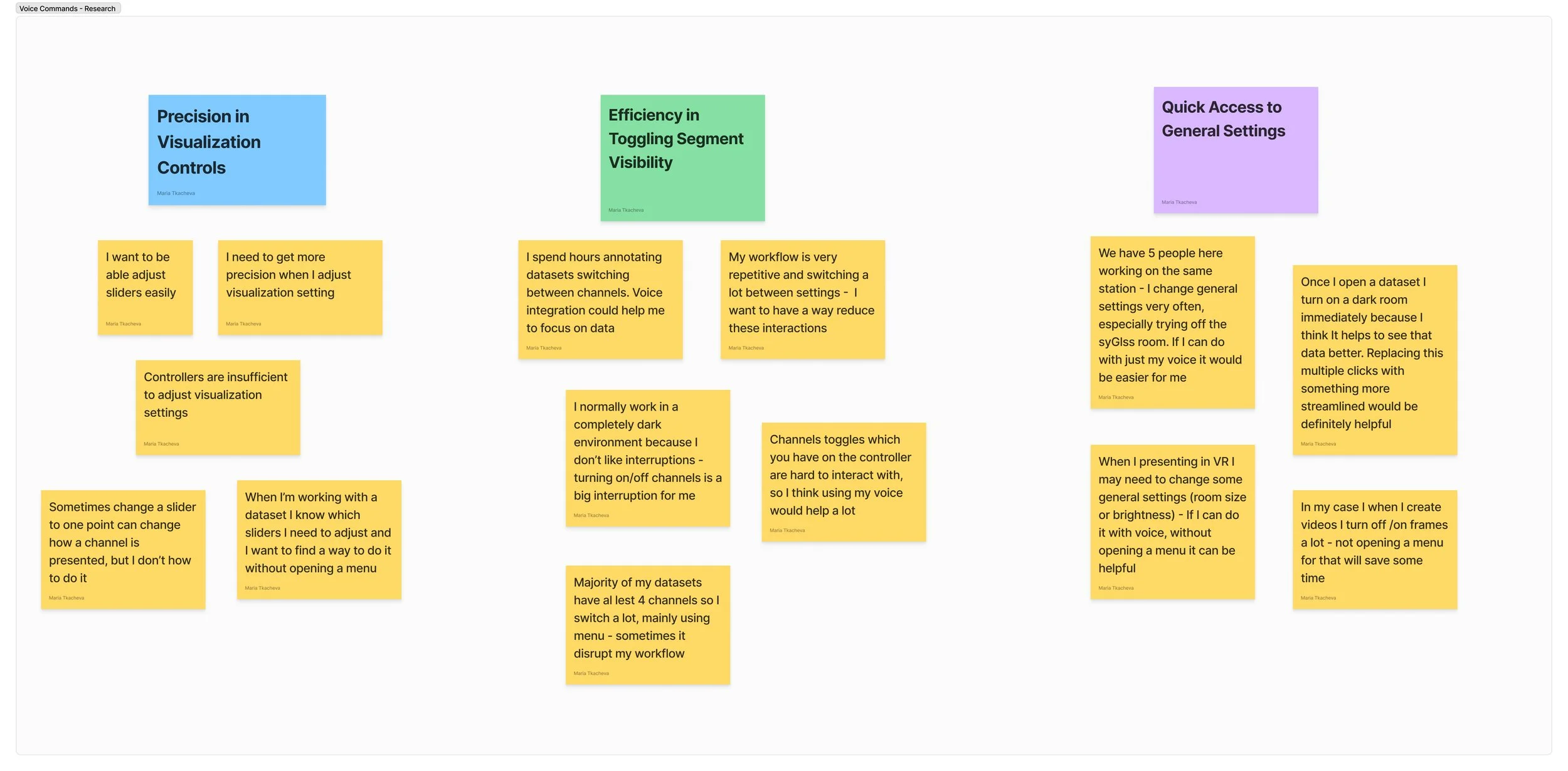

Validating Voice Commands

To ensure voice commands truly improved workflows, I conducted in-depth interviews with eight scientists across Europe and the U.S. These sessions helped identify key pain points and opportunities where voice commands could simplify tasks, enhance usability, and integrate smoothly into existing workflows.

Key Pain Points

Repetitive Clicks → Multi-step interactions interrupted workflows.

Opportunities for Voice Commands

More Precise Visualization Controls → Allows for accurate 3D model manipulation, reducing errors and improving efficiency.

Easier Segment Visibility Toggles → Reduces multi-click actions by enabling seamless visibility adjustments via voice commands.

Faster Access to Settings → Provides instant access to frequently used settings, eliminating unnecessary clicks.

These insights shaped the design direction, ensuring voice functionality directly addressed user pain points while improving efficiency.

Understanding Challenges

To make voice commands intuitive and effective, I identified key challenges across user groups and implemented targeted solutions.

The table below highlights adoption barriers and the design solutions that address them.

IDEATION

Establishing Feature Priorities Based on User Insight

Through user interviews, I uncovered a key frustration: too many clicks in general settings and segmentation controls slowed users down. They wanted a more seamless way to manage settings and fine-tune visualizations.

To streamline workflows and improve efficiency, I proposed integrating voice commands for key actions:

General Settings → Enable, disable, increase, or decrease with simple voice commands (e.g., “Turn on dark mode”).

Precision Controls in Visualization → Use numerical inputs to refine adjustments (e.g., “Set opacity to 0.02”).

Segment Visibility → Instantly toggle channels on and off (e.g., “Hide Channel 2”).

By replacing multi-click actions with intuitive voice interactions, we reduced friction and improved usability, making workflows faster and more efficient.
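The three command categories above can be pictured as a small grammar that turns a transcript into a structured intent. The following is a minimal sketch, assuming the transcript arrives as plain text; the `Intent` type, the regular expressions, and `parse_command` are hypothetical names chosen for illustration and cover only the example phrases quoted above.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Intent:
    kind: str                       # "toggle", "set_value", or "visibility"
    target: str                     # e.g. "dark mode", "opacity", "channel 2"
    value: Optional[float] = None   # numeric value for set_value commands
    enabled: Optional[bool] = None  # on/off state for toggle and visibility


# Hypothetical patterns, one per command category from the text.
_TOGGLE = re.compile(r"^turn (on|off) (.+)$")
_SET = re.compile(r"^set (.+) to (-?\d+(?:\.\d+)?)$")
_VISIBILITY = re.compile(r"^(show|hide) (channel \d+)$")


def parse_command(transcript: str) -> Optional[Intent]:
    """Map a transcript to an Intent, or None if no pattern matches."""
    text = transcript.strip().lower().rstrip(".!?")
    if m := _TOGGLE.match(text):
        return Intent("toggle", m.group(2), enabled=(m.group(1) == "on"))
    if m := _SET.match(text):
        return Intent("set_value", m.group(1), value=float(m.group(2)))
    if m := _VISIBILITY.match(text):
        return Intent("visibility", m.group(2), enabled=(m.group(1) == "show"))
    return None
```

For example, `parse_command("Set opacity to 0.02")` yields a `set_value` intent with target `"opacity"` and value `0.02`, while an unrecognized phrase returns `None` so the caller can fall back to error handling.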

Designing the Whisper AI Experience

With a clear understanding of user needs, I researched best practices from Amazon Alexa, Meta AI Assistant, and Google Assistant to inspire new interaction models.

To shape the Whisper AI user experience, I mapped a high-level user flow, identifying key moments where accuracy and seamless adoption were critical. This approach ensured an intuitive and efficient interaction model tailored to user expectations.

DESIGN

Refining the Workflow & Validating the Solution with Engineers

Building on the high-level user flow, I developed a detailed workflow to address key challenges and ensure seamless integration.

To validate feasibility, I collaborated with engineers to discuss technical constraints and refine the solution.

Final Implementation

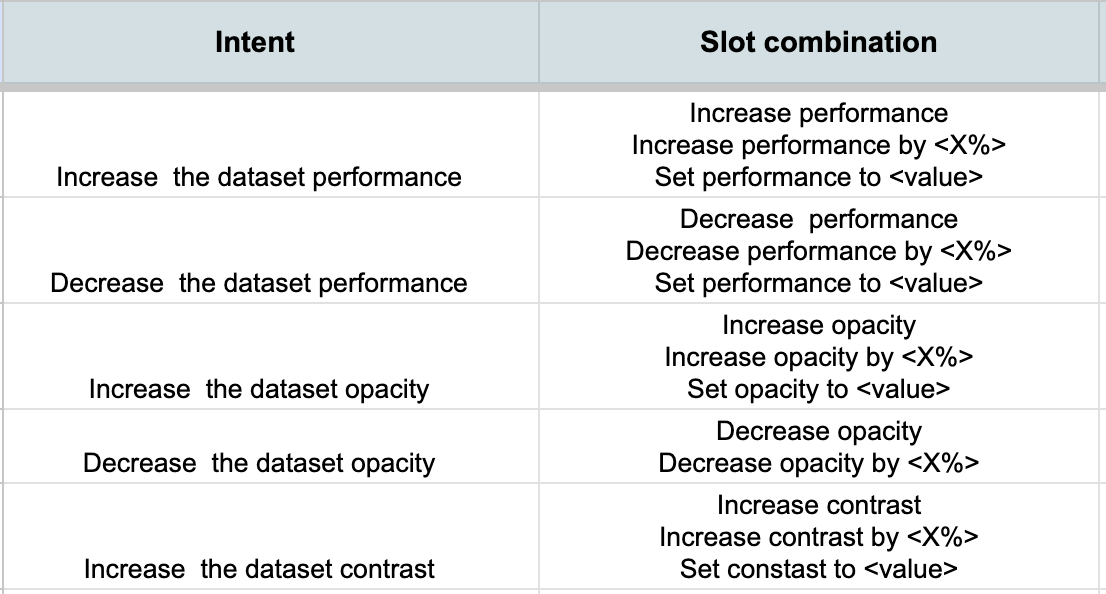

After validating the design, I transitioned to final implementation and developer documentation, ensuring smooth integration. Key design considerations included:

Voice Command Flexibility → Allowed multiple command variations to improve recognition, especially for non-native speakers.

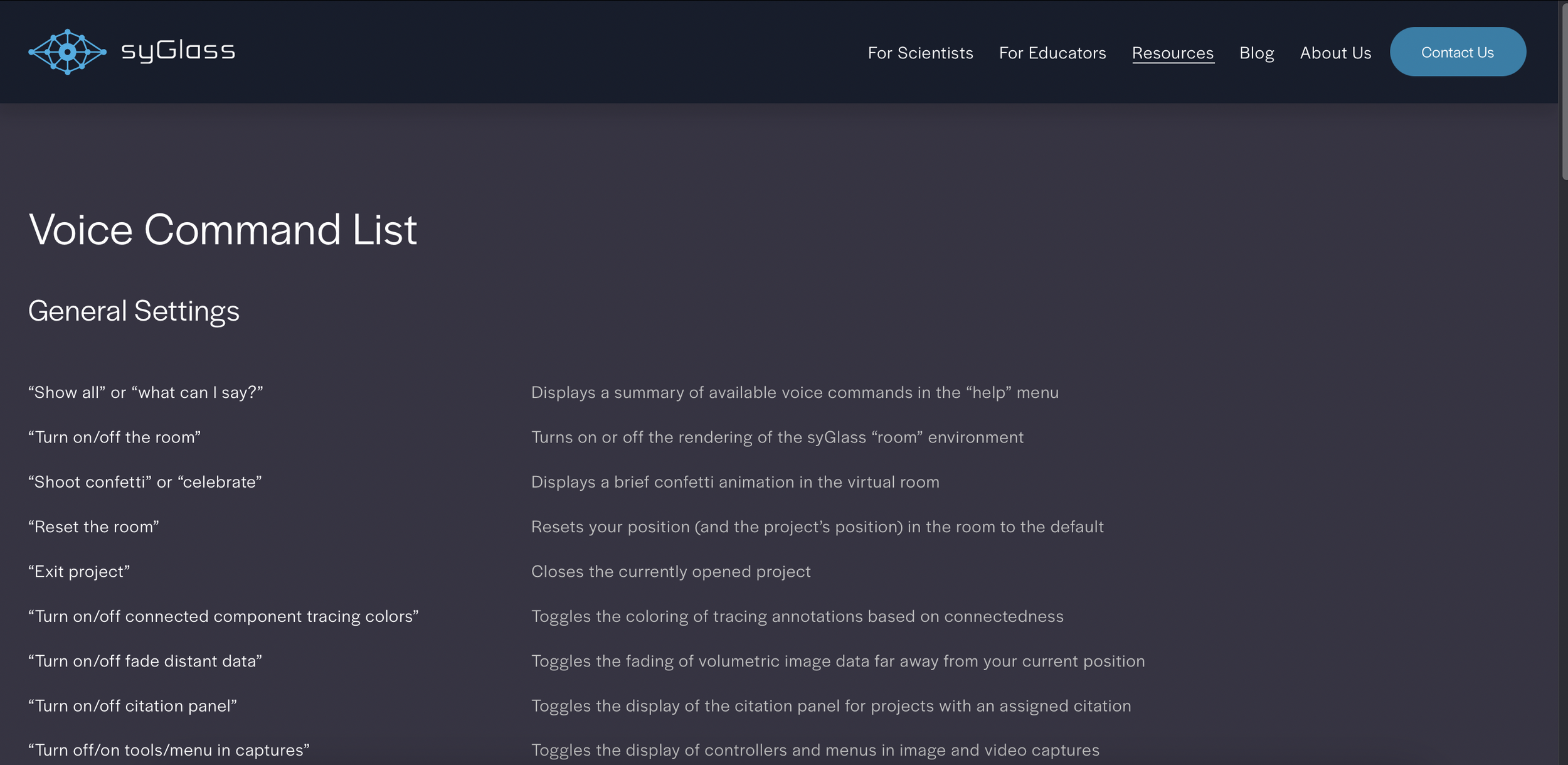

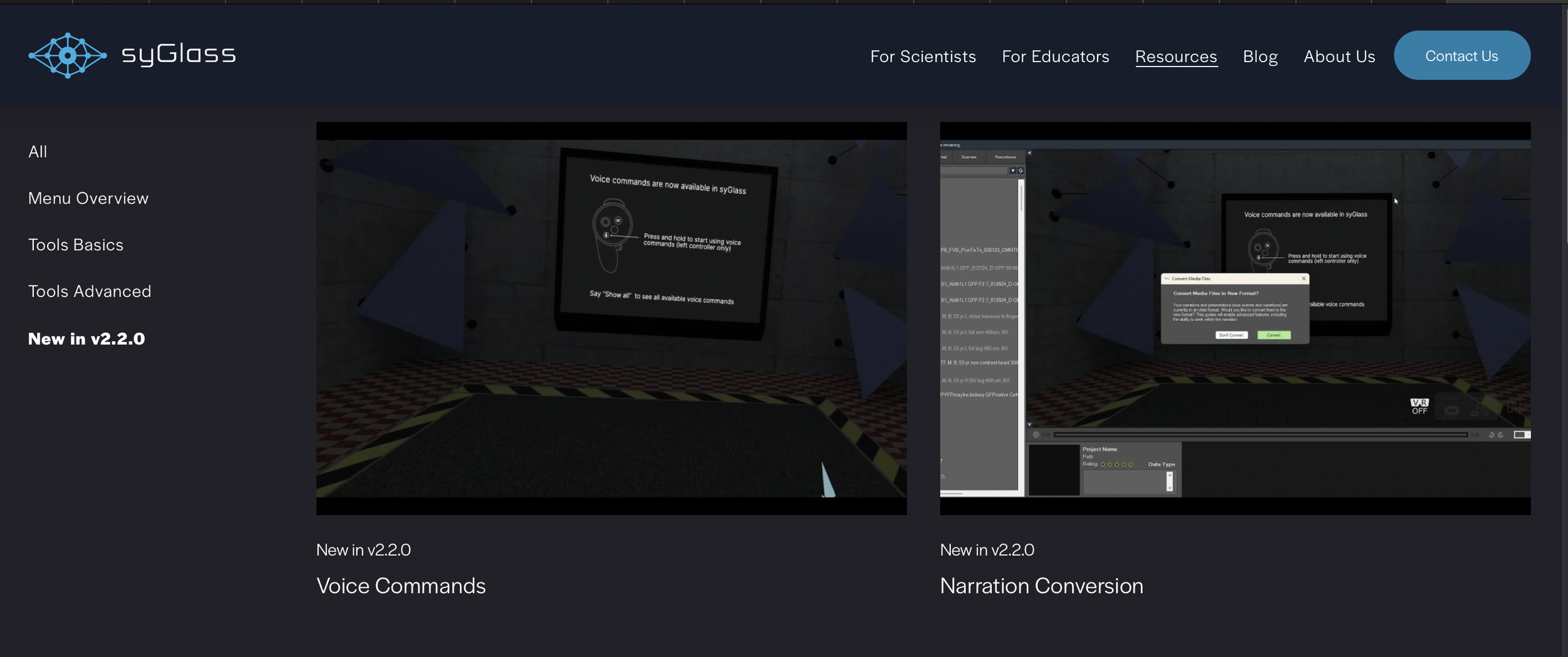

Enhancing Learnability → Organized commands into clear categories, integrated in-VR references, and designed interactive tutorials for seamless onboarding.

Improving Error Recovery → Added alternative suggestions and a “What can I say?” prompt to guide users and reduce frustration.

By focusing on usability, adaptability, and accessibility, this iterative process ensured the voice system felt intuitive and frictionless for all users.
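Supporting multiple command variations can be sketched as a lookup from spoken phrasings to one canonical command. The phrasing table below is an assumption made for illustration (the actual syGlass variants are not listed in this case study), as are the names `VARIANTS` and `canonicalize`.

```python
import re
from typing import Dict, List, Optional, Pattern, Tuple

# Hypothetical phrasing table: each canonical command has three spoken variants.
VARIANTS: Dict[str, List[str]] = {
    "hide channel {n}": ["hide channel {n}", "turn off channel {n}", "disable channel {n}"],
    "show channel {n}": ["show channel {n}", "turn on channel {n}", "enable channel {n}"],
    "turn on dark mode": ["turn on dark mode", "enable dark mode", "switch to dark mode"],
}


def _compile(template: str) -> Pattern[str]:
    """Turn a template such as 'hide channel {n}' into an anchored regex."""
    parts = [re.escape(part) for part in template.split("{n}")]
    return re.compile("^" + r"(?P<n>\d+)".join(parts) + "$")


_COMPILED: List[Tuple[str, Pattern[str]]] = [
    (canonical, _compile(variant))
    for canonical, variants in VARIANTS.items()
    for variant in variants
]


def canonicalize(transcript: str) -> Optional[str]:
    """Return the canonical command for any known variant, else None."""
    text = " ".join(transcript.lower().split()).rstrip(".!?")
    for canonical, pattern in _COMPILED:
        match = pattern.match(text)
        if match:
            return canonical.format(**match.groupdict())
    return None
```

With this table, "Disable channel 2" and "Turn off channel 2" both resolve to `"hide channel 2"`, so downstream code only has to handle one form per action.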

Challenge 1

How can we introduce Voice Command Flexibility to support users with varying levels of English proficiency and accents?

Designed a multi-variant voice model that supports alternative command phrasing. Each command allows at least three variations to accommodate different speech patterns.

Challenge 2

How can we promote learnability to help users understand and effectively use the structure of voice commands?

Implemented a structured command system categorized into toggle vs. slider actions. Added:

In-VR command reference guide for quick lookup.

Onboarding tutorial for first-time users.

Comprehensive web documentation for advanced commands.

Challenge 3

How can we help users recover from errors while keeping them motivated to continue using voice commands?

Integrated an adaptive feedback system where:

The AI suggests alternative recognized commands when errors occur.

Users can invoke a help function, “What can I say?”, to see available options.

Built-in confirmations ensure clarity before executing irreversible actions.
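The error-recovery behavior described for the feedback system (suggestions, a help phrase, and confirmations) can be approximated with fuzzy string matching from Python's standard library. This is a sketch under assumptions, not the shipped logic: the command registry, the irreversible flag, the response wording, and the `respond` function are all hypothetical.

```python
import difflib
from typing import Dict

# Hypothetical registry: command -> whether the action is irreversible.
COMMANDS: Dict[str, bool] = {
    "turn on dark mode": False,
    "hide channel 2": False,
    "show channel 2": False,
    "delete annotations": True,
}
HELP_PHRASE = "what can i say"


def respond(transcript: str, confirmed: bool = False) -> str:
    """Return the system's reply to a transcript."""
    text = " ".join(transcript.lower().split()).rstrip(".!?")
    if text == HELP_PHRASE:
        return "You can say: " + ", ".join(sorted(COMMANDS))
    if text in COMMANDS:
        # Irreversible actions require an explicit confirmation first.
        if COMMANDS[text] and not confirmed:
            return f"Confirm '{text}'? This cannot be undone."
        return f"OK: {text}"
    # Unrecognized input: offer the closest known commands, if any.
    suggestions = difflib.get_close_matches(text, list(COMMANDS), n=2, cutoff=0.6)
    if suggestions:
        return "Did you mean: " + " or ".join(suggestions) + "?"
    return "Sorry, I didn't catch that. Say 'What can I say?' for options."
```

A misheard phrase such as "hide chanel 2" produces a "Did you mean" reply that includes "hide channel 2", while input with no close match points the user to the help phrase instead of failing silently.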

REFLECTION

Impact & Next Steps

With this solution in place, average task completion time dropped from 12 seconds to 7 seconds per action, a 41% efficiency gain measured as time saved per action.
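The 41% figure follows from the two timings if efficiency is read as the share of time saved per action. A quick check, assuming that interpretation (the function name is illustrative):

```python
def time_reduction(before_s: float, after_s: float) -> float:
    """Fraction of time saved per action relative to the original time."""
    return (before_s - after_s) / before_s


# 12 s -> 7 s saves 5 s out of 12 s.
print(f"{time_reduction(12, 7):.1%}")  # 41.7%
```

The exact value is 5/12, or about 41.7%, which is reported here as 41%.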

Within the first three months, adoption reached 38% of all users, including many non-native speakers, leading to increased engagement and smoother workflows.

Next Steps:

Accent Recognition – Improve algorithms for better accuracy across diverse accents.

Performance Analysis – Evaluate usage across demographics to refine functionality.

Feature Scaling – Expand capabilities with advanced and customizable commands.

This project marks a critical first step toward integrating voice assistance into syGlass, paving the way for scalable, AI-driven interactions in spatial computing.